I'll be honest: when someone first told me about MPO connectors three years ago, I thought they were just another buzzword in a sea of networking acronyms. Then I actually saw one in action at a client's data center upgrade. Twelve fibers. One connector. That was it. That's when things clicked.

But here's where it gets interesting (and frankly, a bit messy). Not every enterprise needs the same MPO setup. I've seen companies dump ridiculous amounts of money into 48-fiber trunk cables when they were barely pushing 10G traffic. Meanwhile, other organizations are limping along with traditional LC connections, wondering why their rack space disappeared faster than their budget.

The Density Problem Nobody Talks About Enough

Most articles will give you the standard spiel about "high-density applications" and "future-proofing." Sure, that's part of it. But what they don't tell you is that density isn't just about fitting more stuff in less space, though that alone can cut rack space usage by upwards of 40%, based on what I've seen in hyperscale deployments.

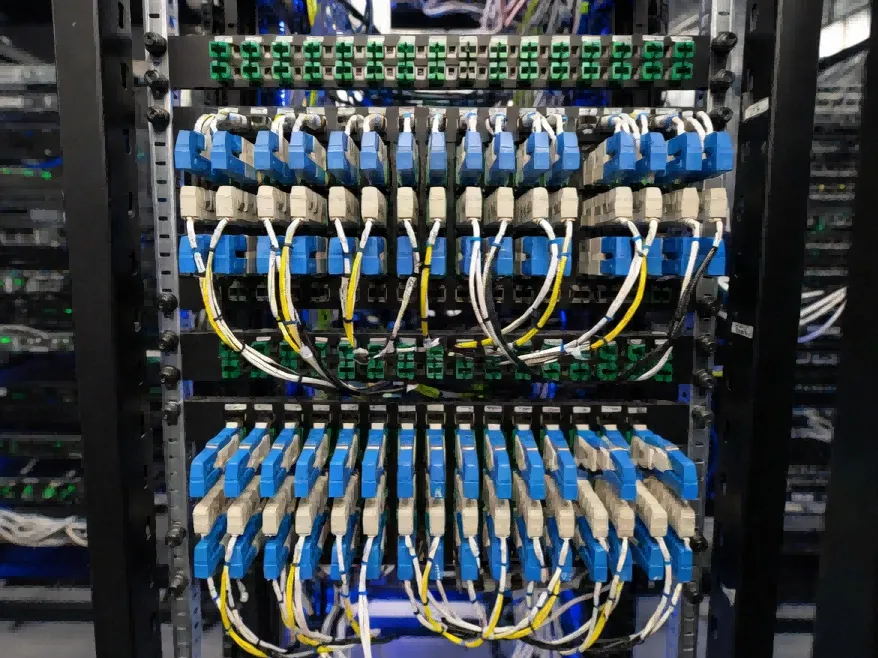

The real issue? Cable management nightmares. You know the ones I'm talking about. That rat's nest behind your server racks that nobody wants to touch because one wrong move and suddenly half the floor goes down. MPO solutions cut through that chaos because you're dealing with one trunk cable instead of twelve separate runs. Less to manage, less to break, less to curse at during 2 AM emergency maintenance.

I worked with a mid-sized financial services company last year (about 300 employees, three office locations). They were expanding their headquarters and needed to wire up two new floors. The network engineer was adamant about sticking with traditional duplex cabling because "that's what we know." Three months into the project, they were already over budget and dealing with airflow issues in their comms closets. The problem wasn't the connectors themselves; it was the sheer volume of copper and fiber creating heat pockets that their HVAC couldn't handle.

When 12-Fiber Makes Sense (And When It Doesn't)

Here's something that drives me crazy: everyone defaults to 12-fiber MPO assemblies like it's the only option. It's not. You've got 8-fiber, 16-fiber, 24-fiber, even 72-fiber configurations if you're really going wild.

For most enterprises (and I'm talking about your typical corporate environment with maybe 500-2000 employees), 12-fiber MPO setups hit a sweet spot. You get enough capacity for 40G connections without overbuilding. The MTP-12 format works beautifully with QSFP+ transceivers, which most enterprise switches have been using since, what, 2015? Earlier?

But (and this is a big but) if you're still running predominantly 10G infrastructure with no concrete plans to upgrade in the next 18-24 months, MPO might be overkill. I know that sounds counterintuitive when everyone's preaching about scalability. Sometimes pragmatism beats future-proofing. An MTP-LC breakout cable can bridge that gap temporarily, letting you connect new 40G switches to legacy 10G equipment. Is it elegant? No. Does it work? Absolutely.

The 24-fiber variants are where things get interesting for larger deployments. Data centers love them because you can pack insane amounts of bandwidth into a single cable run: we're talking 100G, 400G, even 800G parallel optics configurations. But in a typical enterprise campus network? That's probably excessive unless you're dealing with serious east-west traffic between buildings or massive video surveillance systems. I've seen universities and large hospital complexes use 24-fiber MPO trunks for their backbone infrastructure, especially when they're aggregating traffic from multiple buildings into a central data closet.

The Cassette vs. Breakout Panel Debate

Nobody agrees on this, by the way. I've been in meetings where engineers nearly came to blows over cassette modules versus breakout patch panels.

MPO cassettes are these neat enclosed units (usually 1U or 2U) that let you plug an MPO trunk cable into the back and get individual LC connections on the front. They're plug-and-play, which sounds great until you realize you've locked yourself into a specific fiber count and polarity configuration. Want to change from Type A to Type B polarity? You're buying new cassettes. Need to scale up? More cassettes, more rack space.

Breakout patch panels offer more flexibility but require more planning upfront. You're essentially splitting that MPO trunk into individual fiber pairs, which gives you granular control over how you distribute connections. The tradeoff? More complexity during installation, more potential points of failure if you don't get the polarity right (and trust me, polarity mistakes are expensive to fix).

I've noticed smaller enterprises (maybe 200 employees or fewer) tend to prefer cassettes because they simplify the deployment. There's something to be said for reducing the number of decision points when your IT team is already stretched thin. Larger organizations with dedicated network engineering staff often go the breakout panel route because they value the flexibility and are comfortable managing the added complexity.

Polarity: The Thing That Will Make You Question Everything

Okay, this deserves its own section because polarity is where MPO deployments either soar or crash spectacularly.

There are three types: A, B, and C. Type A maintains straight-through fiber positions: fiber 1 goes to position 1 on the other end. Type B flips the array completely, so fiber 1 lands on position 12. Type C flips each adjacent pair (1 swaps with 2, 3 with 4, and so on). Honestly, Type C is weird and mostly used in specific trunk-to-trunk applications that most enterprises won't ever touch.
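If it helps to see the three mappings side by side, here's a minimal Python sketch. The function name `polarity_map` is my own invention for illustration, and it assumes a single-row connector with positions numbered from 1; treat it as a way to reason about position mapping, not as a replacement for a vendor's polarity diagram.

```python
def polarity_map(polarity: str, fibers: int = 12) -> dict[int, int]:
    """Map each near-end fiber position to its far-end position.

    Hypothetical helper: assumes a single-row MPO with positions 1..fibers.
    """
    positions = range(1, fibers + 1)
    if polarity == "A":
        # Straight-through: position 1 arrives at position 1.
        return {p: p for p in positions}
    if polarity == "B":
        # Fully reversed: position 1 arrives at the last position.
        return {p: fibers + 1 - p for p in positions}
    if polarity == "C":
        if fibers % 2:
            raise ValueError("Type C needs an even fiber count")
        # Pair-flipped: 1 swaps with 2, 3 swaps with 4, and so on.
        return {p: p + 1 if p % 2 else p - 1 for p in positions}
    raise ValueError(f"unknown polarity type: {polarity!r}")


for kind in ("A", "B", "C"):
    print(kind, polarity_map(kind))
```

Comparing the map you expect against what a polarity tester reports is a quick way to spot a trunk that doesn't match its label.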

The industry standard for most data center and enterprise deployments has settled on Type B polarity with Method B cabling. Why? Because it naturally maintains proper transmit-to-receive alignment without requiring crossover connections at every point. But here's where it gets messy: if you buy pre-terminated trunk cables from different manufacturers, there's a non-zero chance they're using different polarity conventions even if they both claim "Type B compliance."

I learned this the hard way during a hospital network upgrade. We spec'd everything carefully, ordered from two different vendors to save money on different cable lengths. Installation day comes around, and nothing works. Zero link lights. After four hours of troubleshooting, we discovered one vendor's idea of Type B polarity didn't match the other's. The fibers were crossing in ways they shouldn't. We had to order replacement cables and reschedule the entire cutover. The kicker? Both vendors insisted they were following industry standards. They were-just slightly different interpretations of those standards.

My advice? Stick with one reputable manufacturer for your entire MPO deployment if possible. The cost savings from shopping around usually aren't worth the compatibility headaches.

Single-Mode vs. Multimode in Enterprise Settings

This should be straightforward, but it's not.

Multimode fiber, specifically OM3 and OM4, dominates enterprise MPO deployments. It's cheaper, works perfectly fine for 10G at distances under 300 meters (which covers most in-building applications), and plays nicely with the VCSEL-based transceivers that come standard in most enterprise networking gear. OM4 in particular has become the default choice because it supports 10G up to 400 meters, 40G (40GBASE-SR4) up to 150 meters, and 100G (100GBASE-SR4) up to 100 meters. For in-building runs on a corporate campus, that's usually sufficient.
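Reach depends on the transceiver standard as much as on the fiber grade, so it's worth checking each planned run against both. Here's a rough Python sketch of that check. The table holds the nominal multimode reaches as I understand them from the IEEE 802.3 short-reach specs; the names `NOMINAL_REACH_M` and `run_is_within_reach` are made up for this example, and you should confirm the numbers against your actual transceiver datasheets.

```python
# Nominal reach in meters by (transceiver standard, fiber grade).
# Assumed from commonly quoted IEEE 802.3 figures; verify with datasheets.
NOMINAL_REACH_M = {
    ("10GBASE-SR", "OM3"): 300,
    ("10GBASE-SR", "OM4"): 400,
    ("40GBASE-SR4", "OM3"): 100,
    ("40GBASE-SR4", "OM4"): 150,
    ("100GBASE-SR4", "OM3"): 70,
    ("100GBASE-SR4", "OM4"): 100,
}


def run_is_within_reach(standard: str, fiber: str, length_m: float) -> bool:
    """Return True if a run of length_m fits the nominal reach."""
    try:
        reach = NOMINAL_REACH_M[(standard, fiber)]
    except KeyError:
        raise ValueError(f"no reach data for {standard} on {fiber}") from None
    return length_m <= reach


# A 120 m closet-to-closet run works for 40G on OM4 but not on OM3.
print(run_is_within_reach("40GBASE-SR4", "OM4", 120))
print(run_is_within_reach("40GBASE-SR4", "OM3", 120))
```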

Single-mode MPO solutions exist, and they're growing, but they're still relatively niche in enterprise environments. You see them in longer-distance applications: connecting buildings across a large campus, metro area network deployments, that sort of thing. The fiber itself is more expensive, the connectors require tighter tolerances (which translates to higher costs), and you need different transceiver optics. Unless you've got runs exceeding 500 meters or you're planning for some truly massive bandwidth scaling in the distant future, multimode makes more sense for most enterprises.

There's also this weird middle ground emerging with BiDi (bidirectional) MPO solutions using single-mode fiber. They're trying to push more bandwidth through fewer fiber pairs by using wavelength division multiplexing. It's clever technology, but the adoption in enterprise space has been... slow. Data centers are experimenting with it, telecom providers love it, but your average corporate IT department? They're sticking with tried-and-true multimode parallel optics.

The Pre-Terminated vs. Field-Terminated Decision

This one's actually pretty clear-cut: go pre-terminated unless you have a really, really good reason not to.

Field terminating MPO connectors is technically possible. Manufacturers make kits for it. But it's finicky work that requires specialized tools, a clean environment, and honestly more patience than most people have. The precision needed to align 12 or 24 fiber ends simultaneously in an MT ferrule is borderline ridiculous. I've watched trained technicians spend 45 minutes on a single connector, only to have it fail testing.

Pre-terminated assemblies come factory-made, tested, and certified with actual insertion loss and return loss numbers. Yes, you pay a premium. Yes, you need to plan your cable lengths more carefully upfront. But the time savings during installation and the reduced risk of deployment failures make it worthwhile for probably 95% of enterprise applications.

The exception? Really unique installation scenarios where you can't predict cable lengths accurately, or situations where running pre-terminated cables through existing conduit is impossible because the connectors won't fit. In those cases, you might need to pull raw fiber and terminate on-site. But even then, I'd look hard at whether you could use a different routing path that accommodates pre-terminated solutions.

Trunk Cable Configurations That Actually Matter

Here's a dirty little secret: most enterprises only need three or four different MPO trunk cable configurations to handle probably 80% of their cabling needs.

You've got your basic MPO-to-MPO trunk cables. These run between patch panels, cassettes, or directly between switches if you're feeling brave. Common lengths are 5, 10, 15, and 30 meters because those distances cover most rack-to-rack and closet-to-closet scenarios. Shorter lengths get annoying to manage because of the connector boot sizes; longer lengths are usually unnecessary in typical enterprise environments.

Then there are MPO-to-LC breakout cables (also called harness cables or fanout cables, depending on who you ask). These are incredibly useful for transitioning between your high-density MPO infrastructure and individual server connections or legacy equipment that still uses LC ports. A 12-fiber MPO breaks out into six duplex LC connectors. These are your workhorses for connecting storage arrays, older generation switches, anything that predates the MPO standardization in enterprise gear.
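The arithmetic is simple, but it's handy to have when you're counting trunks for a closet. Here's a small Python sketch. It assumes adjacent fibers are paired into duplex legs (1-2, 3-4, and so on), which is a simplification since the real pairing depends on your polarity method; both function names are hypothetical.

```python
import math


def duplex_legs(fiber_count: int) -> list[tuple[int, int]]:
    """Pair adjacent fibers (1-2, 3-4, ...) into duplex LC legs.

    Assumption: adjacent pairing. Actual harness pairing varies with
    the polarity method, so check the harness drawing.
    """
    if fiber_count <= 0 or fiber_count % 2:
        raise ValueError("fiber count must be a positive even number")
    return [(f, f + 1) for f in range(1, fiber_count, 2)]


def trunks_needed(duplex_links: int, fibers_per_trunk: int = 12) -> int:
    """How many trunks it takes to carry the given number of duplex links."""
    legs_per_trunk = len(duplex_legs(fibers_per_trunk))
    return math.ceil(duplex_links / legs_per_trunk)


print(len(duplex_legs(12)))   # 6 duplex LC legs from one 12-fiber MPO
print(trunks_needed(20))      # 4 twelve-fiber trunks for 20 duplex links
print(trunks_needed(20, 24))  # 2 twenty-four-fiber trunks for the same links
```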

Some deployments use MPO-to-MPO crossover trunks, but honestly? If you're designing your polarity correctly upfront, you rarely need dedicated crossover cables. That's more of a troubleshooting tool or a fix for polarity mistakes than a standard component.

Testing: The Unglamorous Part Nobody Wants To Do

You need to test MPO connections. Period. I don't care if they came pre-terminated from a reputable manufacturer with test reports in the box. Test them anyway.

The three critical tests are inspection, polarity verification, and insertion loss measurement. Inspection means actually looking at the fiber end-faces with a microscope (yes, all 12 or 24 of them). Contamination kills optical performance faster than anything else, and MPO connectors are contamination magnets because of their large ferrule surface area.

Polarity testing verifies that your fiber 1 actually connects to the right position on the other end. This sounds basic, but polarity mistakes are the number one cause of MPO link failures in my experience. There are specialized testers that can check all fiber positions simultaneously, which is way better than trying to verify each fiber pair manually with a light source and power meter.

Insertion loss testing measures actual optical performance. For enterprise multimode MPO connections, you're typically looking for less than 0.5 dB per mated connection pair, though the exact spec depends on your fiber type and connector grade. Anything over 0.75 dB should make you suspicious.
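If you're writing pass/fail criteria into a contract, it helps to spell the thresholds out explicitly. Here's a minimal Python sketch using the two rule-of-thumb numbers above (0.5 dB and 0.75 dB). The function name and the "marginal" middle band are my own framing, not anything from a standard, so swap in the limits from your actual spec.

```python
def classify_mated_pair_loss(
    loss_db: float,
    target_db: float = 0.5,    # rule-of-thumb target from the text above
    suspect_db: float = 0.75,  # rule-of-thumb "be suspicious" threshold
) -> str:
    """Classify one mated connection pair's measured insertion loss."""
    if loss_db < 0:
        raise ValueError("insertion loss cannot be negative")
    if loss_db < target_db:
        return "pass"
    if loss_db <= suspect_db:
        return "marginal"  # worth a clean-and-retest before accepting
    return "suspect"


# Hypothetical readings for a few mated pairs, in dB.
for reading in (0.28, 0.61, 0.92):
    print(reading, classify_mated_pair_loss(reading))
```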

The problem? Good MPO test equipment is expensive. A decent microscope probe for MPO inspection runs $3,000-5,000. Automated polarity testers can push $10,000 or more. Small enterprises usually don't have this gear in-house, which means relying on your cabling contractor to do thorough testing. Make sure that's explicitly written into your contract with specific pass/fail criteria, because I've seen way too many installations where "testing" meant "we plugged it in and the link light came on."

When MPO Doesn't Make Sense (Yes, Really)

Let's talk about scenarios where MPO is actually the wrong choice.

Small branch offices with minimal IT infrastructure. If you've got a single 48-port switch and a handful of access points, spending money on MPO infrastructure is like buying a Ferrari to commute three miles to work. Stick with traditional LC duplex connections. They're cheaper, easier to troubleshoot, and your local IT person (who's probably also handling printer problems) doesn't need specialized knowledge.

Environments with constant reconfiguration needs. MPO shines in relatively static infrastructure-data center spine-leaf architectures, building backbone cabling, things that get installed once and modified rarely. But if you're in a creative agency or research lab where network topology changes monthly, the inflexibility of MPO trunks becomes a liability. You can't easily "move" a single fiber pair like you can with duplex cables.

Budget-constrained upgrades. MPO infrastructure costs more upfront. The cables cost more, the connectors cost more, the installation labor costs more (even with pre-terminated solutions), the test equipment costs more. If you're not actually utilizing the density or bandwidth advantages, you're paying a premium for capabilities you don't need. Sometimes boring old duplex LC cabling is the right answer.

The Real-World Enterprise Deployment Model

So what does a sensible MPO deployment actually look like for a typical enterprise?

Most organizations I work with use a hybrid approach. Their main data center or central network closet uses MPO infrastructure heavily: trunk cables between core switches, high-density patch panels for server connections, maybe some MPO cassettes for breakout to individual racks. This is where the density benefits really shine because you're aggregating traffic from across the organization.

Building backbone connections between IDFs (intermediate distribution frames) often use MPO as well, especially in multi-building campuses. A single 12-fiber or 24-fiber trunk can handle uplinks for multiple closets, dramatically simplifying inter-building cabling.

But then (and this is important) they transition to traditional LC duplex cables for the last hop to end devices. Access switches, wireless controllers, individual servers that aren't part of a high-density compute cluster. This last-mile connectivity is where LC still makes more sense for most organizations because of flexibility and cost considerations.

The result is a kind of hierarchical fiber architecture: MPO for aggregation and backbone, LC for distribution and access. It's not as elegant as going full MPO everywhere, but it's practical and cost-effective.

Manufacturing Quality: Why It Matters More Than You Think

Not all MPO connectors are created equal, and the quality differences are stark.

The MT ferrule (that rectangular piece that actually holds the fibers) requires incredibly tight manufacturing tolerances. We're talking micron-level precision in fiber positioning and ferrule end-face geometry. Cheap connectors might have fiber holes that are slightly off-center, or end-faces that aren't properly polished, or guide pin holes that don't align correctly. These tiny variations compound across multiple connection points and can absolutely wreck your optical budget.

Premium MPO connectors from manufacturers like US Conec (who actually trademarked the MTP brand), Corning, and Senko consistently hit insertion loss numbers under 0.35 dB. Generic connectors from questionable suppliers? I've seen them exceed 1.0 dB right out of the box. In a multi-hop connection scenario, those losses add up fast.
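To put rough numbers on "add up fast," here's a back-of-the-envelope Python sketch of end-to-end channel loss. The 3.0 dB/km fiber attenuation and the 1.9 dB budget are assumptions I picked for illustration (use the figures for your fiber and transceiver), and both function names are hypothetical.

```python
def channel_loss_db(
    mated_pairs: int,
    loss_per_pair_db: float,
    length_m: float,
    fiber_db_per_km: float = 3.0,  # assumed multimode attenuation at 850 nm
) -> float:
    """Estimate total loss: connector losses plus fiber attenuation."""
    connector_loss = mated_pairs * loss_per_pair_db
    fiber_loss = (length_m / 1000.0) * fiber_db_per_km
    return connector_loss + fiber_loss


def fits_budget(loss_db: float, budget_db: float) -> bool:
    """Return True if the estimated loss stays within the link budget."""
    return loss_db <= budget_db


BUDGET_DB = 1.9  # illustrative budget only; take yours from the optics spec

# Four mated pairs over 100 m: premium vs. generic connectors.
premium = channel_loss_db(4, 0.35, 100)
generic = channel_loss_db(4, 1.0, 100)
print(f"premium: {premium:.2f} dB, fits: {fits_budget(premium, BUDGET_DB)}")
print(f"generic: {generic:.2f} dB, fits: {fits_budget(generic, BUDGET_DB)}")
```

Under those assumptions the premium connectors squeak in under the budget while the generic ones blow through it more than twice over, which is the whole argument for paying attention to connector grade.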

The housing quality matters too. Better connectors use more robust plastics or metal components, they have better strain relief, the latch mechanism actually stays latched after 50 connection cycles instead of getting loose and floppy. These seem like minor details until you're troubleshooting intermittent connectivity issues caused by a connector that won't stay fully seated because the latch is worn out.

Jacket Types and Why Your Facilities Team Cares

This is going to sound boring, but it's actually important.

MPO trunk cables come in different jacket types: PVC (polyvinyl chloride), OFNP (plenum-rated), OFNR (riser-rated), LSZH (low smoke zero halogen). The difference matters for building codes and fire safety regulations.

In North America, if you're running cables through plenum spaces (those air return areas above drop ceilings or below raised floors), building codes generally require OFNP-rated cable. It's designed to resist flame spread and produce very little smoke if it catches fire. Riser-rated cables (OFNR) are for vertical shafts between floors. Regular PVC-jacketed cables are only acceptable for horizontal runs in non-plenum spaces.

Your facilities manager will probably know this stuff better than your IT team, but it's worth checking during the planning phase. I've seen installations get halted mid-way through because the cabling contractor brought the wrong jacket type and the building inspector wouldn't sign off. Delays cost money.

LSZH cables are more common in Europe and other international markets due to different fire safety regulations, but they're gaining traction in North America too, especially in high-occupancy buildings. They cost a bit more than standard plenum cables but provide additional safety margin.

The Migration Path From Legacy Infrastructure

Most enterprises aren't building greenfield networks. You've got existing infrastructure, probably miles of duplex LC or SC fiber that's working fine. How do you migrate to MPO without forklift-upgrading everything?

The answer involves a lot of hybrid connectivity and patience.

Start by deploying MPO infrastructure in your most density-constrained areas first. Usually that's your main data center or primary network core. Use MTP-to-LC breakout cables to interface with your existing equipment. As switches reach end-of-life and get replaced with newer gear that has native MPO support, you gradually reduce your dependence on breakout solutions.

Backbone links between buildings or floors are often good candidates for early MPO adoption because they're relatively isolated from your edge infrastructure. You can swap out a handful of duplex LC uplink cables with a single MPO trunk without disrupting end-user connectivity.

The mistake I see organizations make is trying to do everything at once. They rip out perfectly functional cabling to install MPO infrastructure they don't yet have the equipment to fully utilize. Then they sit there with $100,000 worth of unused fiber capacity while their actual users are complaining about slower-than-expected network performance because the budget got blown on premature infrastructure upgrades instead of more access points or faster internet connectivity.

Incremental migration isn't sexy, but it's smart.

Vendor Lock-In Concerns

This is going to be controversial, but vendor diversity in MPO deployments is overrated.

I mentioned earlier the polarity compatibility issues between different manufacturers. But it goes beyond that. Fiber end-face geometry, ferrule specifications, housing tolerances-every manufacturer has their own interpretation of "standards-compliant" that might not play nicely with someone else's interpretation.

For critical infrastructure, standardizing on a single manufacturer's MPO components gives you predictable performance and simplified troubleshooting. Yes, you lose negotiating leverage for pricing. Yes, you're somewhat dependent on that vendor's product availability and support. But the operational benefits of knowing that all your connectors will mate properly and deliver consistent performance outweigh the supply chain risks for most enterprises.

The exception is if you're large enough to have dedicated network engineering staff who can manage multiple manufacturers' products and maintain detailed documentation of what goes where. Google can do that. Your average 500-person company probably shouldn't try.

The Future-Ish Trends To Watch

800G Ethernet standards are being finalized, and they're built around MPO-16 connectors instead of the traditional MPO-12 format. Does this matter for enterprises right now? Not really. Most organizations are still transitioning to 40G, some are pushing 100G in their cores, and 800G is solidly in the "maybe in five years" category.

But if you're planning a major infrastructure refresh in 2025-2026 and expect it to last a decade, it might be worth considering whether your panels and cassettes can accommodate future MPO-16 deployments. As far as I know, the 16-fiber connectors keep roughly the same footprint but use an offset key, so they won't mate with MPO-12 adapters or cassettes, which affects panel planning.

There's also growing interest in MMC (Miniature Multi-Channel) connectors, which are even more compact than standard MPO. They're designed for ultra-high-density applications and use a push-pull latching mechanism that's easier to operate in tight spaces. Early adoption has been mostly in hyperscale data centers, but the technology could trickle down to enterprise use cases eventually.

Honestly though? For most enterprises, focusing on properly implementing current MPO-12 technology makes more sense than chasing emerging connector formats that may or may not gain widespread adoption.

At the end of the day, MPO fiber solutions suit enterprises that are dealing with real density challenges, planning for significant bandwidth growth, or managing multiple data closets that need robust interconnection. They're less useful for small organizations with simple networking needs or environments requiring constant physical reconfiguration.

The sweet spot is probably companies with 300+ employees, multiple buildings or floors, and networking infrastructure that gets refreshed on a 5-7 year cycle. That's where the cost-performance benefits actually materialize instead of remaining theoretical advantages in a vendor brochure.

But like everything in IT, "it depends" remains the most accurate answer to almost any question about whether MPO is right for your specific situation.